Why Most AI Projects Stall After the Demo

AI demos are compelling. The model works, the outputs are impressive, and the possibilities feel immediate.

But a successful demo is not the same as a production system. Many AI initiatives lose momentum not because the technology fails, but because the operational gap between the two is larger than expected.

Closing that gap is what turns excitement into sustained impact.

The Demo Environment Is Designed for Success

Demos are controlled by design. The data is clean. The workflow is simplified. Edge cases are limited. Stakeholders see the best-case scenario.

Production environments introduce a different reality:

- Messy, inconsistent data

- Cross-system dependencies

- Security and compliance requirements

- Real performance expectations

- Accountability for outcomes

An AI system that performs well in isolation must now function inside a broader operational framework. Without that framework, progress slows.

Where Momentum Breaks

Most projects stall at the same transition point: moving from proof-of-concept to operational integration.

The model may be ready, but key questions remain unanswered:

- Who owns the outputs?

- How are decisions audited?

- What happens when the agent encounters an exception?

- How are workflows updated safely over time?

When these questions aren’t addressed early, teams hesitate to expand deployment. The result is a promising pilot that never becomes infrastructure.

The Operational Gap

The gap between demo and production isn’t about intelligence. It’s about workflow design.

Production systems require:

- Clear ownership

- Defined escalation paths

- Embedded governance

- Monitoring and performance tracking

- Version control and documentation

AI agents thrive when supported by structured workflows. Without structure, even powerful models remain isolated tools.
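
To make "clear ownership" and "defined escalation paths" concrete, here is a minimal Python sketch. Everything in it is an illustrative assumption rather than any particular platform's API: the `AgentOutput` and `RoutingDecision` types, the `route` function, and the 0.8 confidence threshold are all hypothetical. It shows one simple way to guarantee that every agent output ends up with a named owner, and that low-confidence outputs go to a person instead of being applied automatically.

```python
from dataclasses import dataclass

# Hypothetical threshold: outputs below this confidence go to a human.
CONFIDENCE_THRESHOLD = 0.8


@dataclass
class AgentOutput:
    task_id: str
    label: str
    confidence: float  # assumed to be in the range [0.0, 1.0]


@dataclass
class RoutingDecision:
    task_id: str
    action: str  # either "auto_apply" or "escalate"
    owner: str   # the person or team accountable for this outcome


def route(output: AgentOutput, workflow_owner: str, escalation_owner: str) -> RoutingDecision:
    """Apply a confident output automatically; escalate anything else."""
    if output.confidence >= CONFIDENCE_THRESHOLD:
        return RoutingDecision(output.task_id, "auto_apply", workflow_owner)
    return RoutingDecision(output.task_id, "escalate", escalation_owner)
```

In practice the threshold and the owners would come from configuration that is itself versioned and reviewed, which is where the governance and version-control requirements above come in.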

Workflow Automation Is the Bridge

This is where workflow automation becomes essential.

Instead of treating an AI agent as a standalone capability, leading teams embed agents into orchestrated workflows that:

- Connect multiple systems

- Apply role-based permissions

- Trigger downstream actions

- Track performance and outcomes

When AI is part of a coordinated workflow, it becomes dependable and scalable. Execution and intelligence work together.
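
As a rough illustration of role-based permissions gating downstream actions, consider the Python sketch below. The role names, the `PERMISSIONS` table, and the `run_step` helper are hypothetical and chosen only for this example. A real orchestration layer would typically load permissions from an identity or policy system.

```python
from typing import Callable

# Hypothetical role-to-permission mapping, for illustration only.
PERMISSIONS: dict[str, set[str]] = {
    "agent": {"categorize"},
    "supervisor": {"categorize", "issue_refund"},
}


def run_step(role: str, action: str, handlers: dict[str, Callable[[], str]]) -> str:
    """Run a workflow action only if the given role is permitted to perform it."""
    if action not in PERMISSIONS.get(role, set()):
        raise PermissionError(f"role {role!r} may not perform {action!r}")
    # The handler stands in for a downstream action in another system.
    return handlers[action]()
```

Here an AI agent running under the `agent` role could categorize a request, but a refund would be rejected unless the step ran under the `supervisor` role.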

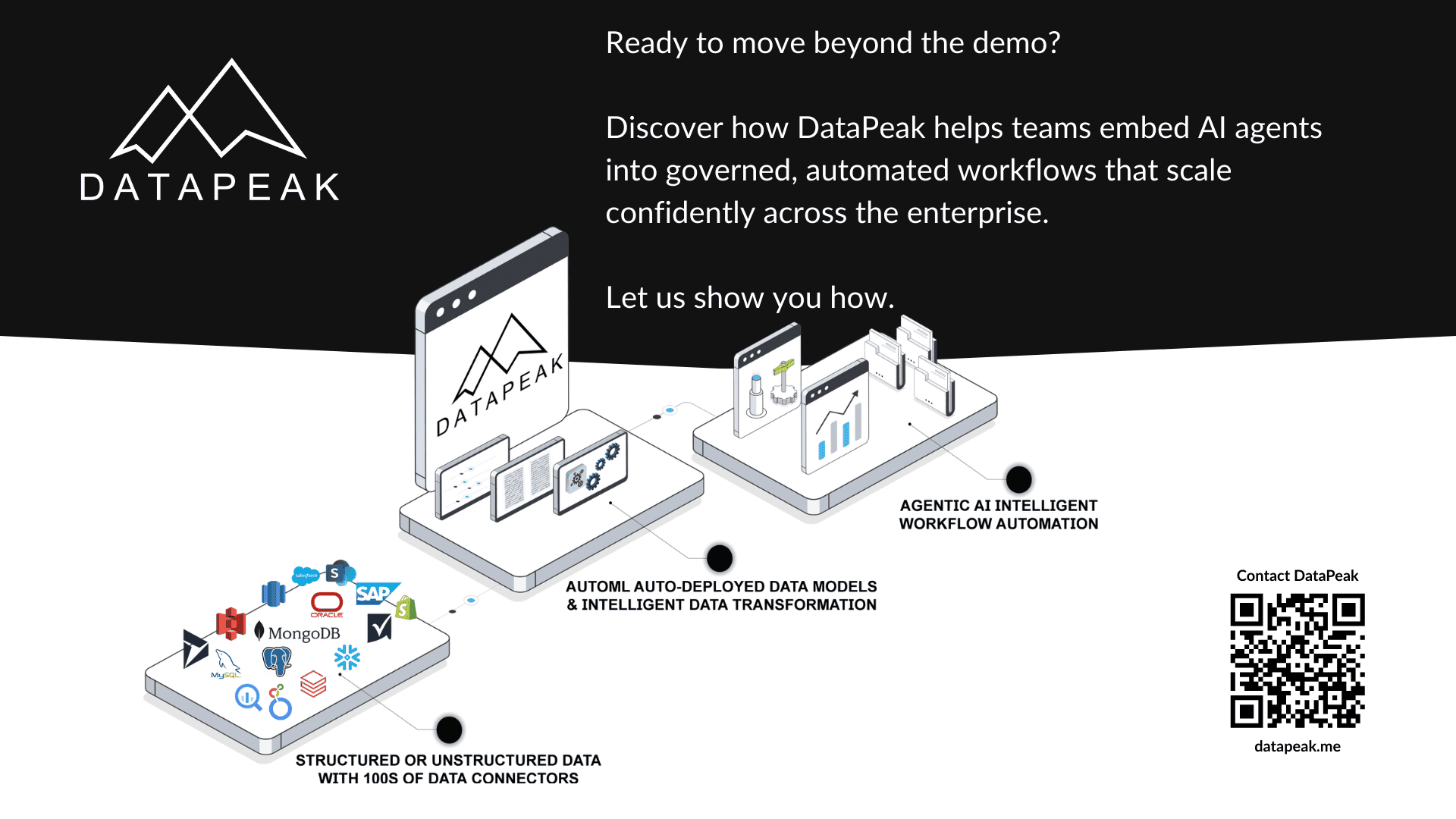

Closing the Gap with DataPeak

Teams using DataPeak approach the transition deliberately.

An AI agent might begin as a demo that categorizes incoming support tickets. Within DataPeak, that capability can evolve into a production workflow that:

- Applies governance controls automatically

- Routes tickets across departments

- Logs every decision for auditability

- Monitors exception rates in real time

- Allows safe updates through versioned workflows

Because workflow automation, monitoring, and governance are built into the platform, teams can scale confidently. The AI agent doesn’t just impress in a demo; it operates reliably within daily business processes.
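
The ticket example can be sketched in plain Python to show the moving parts. This is not DataPeak's API. The `TicketWorkflow` class, the `ROUTES` table, the `WORKFLOW_VERSION` tag, and the keyword-based `keyword_classifier` (a stand-in for the AI agent) are all assumptions made for illustration. The sketch routes each ticket to a department, records every decision in an audit log tagged with the workflow version, and tracks how often the agent produces a category the workflow cannot route.

```python
import json
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable

WORKFLOW_VERSION = "v1"  # hypothetical version tag recorded with every decision

# Hypothetical mapping from ticket category to owning department.
ROUTES = {"billing": "finance", "bug": "engineering", "account": "support"}


@dataclass
class TicketWorkflow:
    classify: Callable[[str], str]  # stand-in for the AI agent
    audit_log: list[str] = field(default_factory=list)
    processed: int = 0
    exceptions: int = 0

    def handle(self, ticket_id: str, text: str) -> str:
        """Classify a ticket, route it, and log the decision."""
        self.processed += 1
        category = self.classify(text)
        department = ROUTES.get(category)
        if department is None:
            # Unroutable category: count it and send it to a human queue.
            self.exceptions += 1
            department = "manual_review"
        self.audit_log.append(json.dumps({
            "ticket_id": ticket_id,
            "category": category,
            "department": department,
            "workflow_version": WORKFLOW_VERSION,
            "timestamp": datetime.now(timezone.utc).isoformat(),
        }))
        return department

    def exception_rate(self) -> float:
        """Fraction of processed tickets that needed manual review."""
        return self.exceptions / self.processed if self.processed else 0.0


def keyword_classifier(text: str) -> str:
    """Toy classifier used only to make the sketch runnable."""
    lowered = text.lower()
    if "invoice" in lowered or "refund" in lowered:
        return "billing"
    if "error" in lowered or "crash" in lowered:
        return "bug"
    return "unknown"


workflow = TicketWorkflow(classify=keyword_classifier)
workflow.handle("T-1", "Please refund my last invoice")  # routed to finance
workflow.handle("T-2", "Where is your office located?")  # routed to manual_review
```

Recording the workflow version alongside each decision is what lets a later change be rolled out and compared safely: the audit log shows which version produced which outcome.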

This is how organizations move from experimentation to operational AI.

Sustaining AI Momentum

The difference between stalled projects and scaled systems is operational maturity.

Teams that succeed treat AI initiatives as infrastructure from the beginning. They design workflows, embed governance, and establish monitoring early. That preparation reduces friction when it’s time to expand deployment.

AI momentum doesn’t disappear because the technology underperforms. It slows when operational foundations aren’t in place.